Docker + ROS: How to listen to ROS nodes in External Docker Containers

Motivation and use case

Working with Pepper robots at the Knowledge Technology Research Group, we have the high-level goal of building Pepper into an interactive demonstration platform. One of the brainstormed requirements is that a Pepper robot should be capable of autonomously navigating our office space and gathering the employees for a joint lunch break. To approach the mapping and localization problem at hand, we decided to employ the Visual Simultaneous Localization and Mapping (VSLAM) [1] algorithm. Instead of implementing the algorithm from scratch, we chose the OpenVSLAM implementation by Sumikura et al. [2], which happens to come with a Dockerfile. Thus, the objective is clear: get Pepper’s sensor data with ROS (possibly do some data cleaning), then feed the data to the VSLAM algorithm, which is running in its Docker container. But getting the two technologies to work hand in hand is only trivial for people who have a deep understanding of both frameworks, which I didn’t initially have. Hence I want to share what took me roughly a day to figure out…

The problem

Looking for how to approach the issue of reading ROS sensor data in Docker containers, I consulted the official documentation. ROS’s documentation regarding Docker [3] only shows how to listen to ROS nodes/topics when the main roscore command is run inside a Docker container as well. That is not what I wanted, though: all our other projects were already implemented outside of Docker, and we only needed Docker for one component, the VSLAM implementation. The documentation regarding Docker’s main ROS image [4] didn’t help me either.

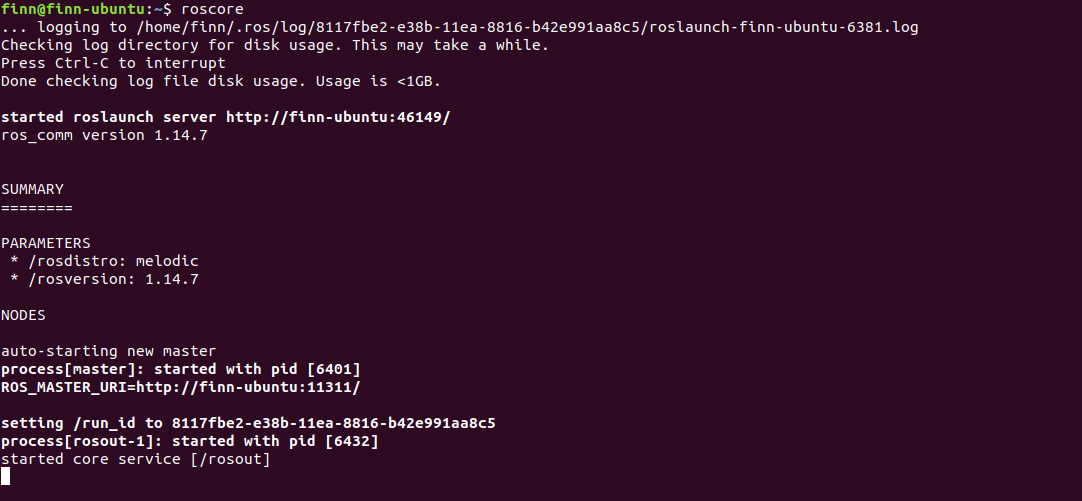

Hence, to begin tackling this problem, the first step is clear: roscore must be running somewhere, since this is a requirement for every ROS-based system:

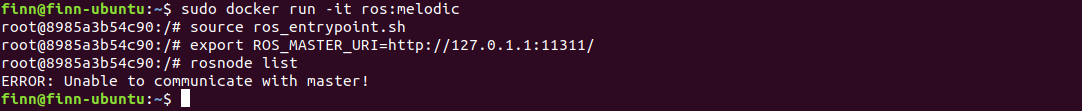

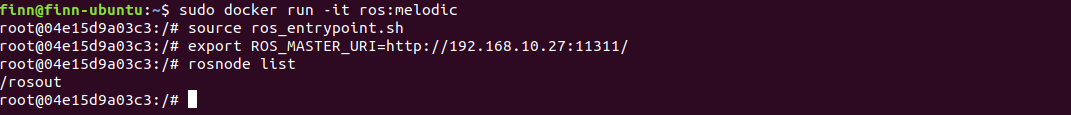

The next, similarly basic, step is to launch our ROS docker image (in a new terminal) and try to start communicating with the running roscore. Following the instructions from [3] again, we run the image and source the entrypoint. To test whether the communication between the external roscore and our docker container works, we use the rosnode list command, which lists all active nodes. Given that roscore is running, there should always be at least the /rosout node. However, as we can see, executing these steps yields "ERROR: Unable to communicate with master!"

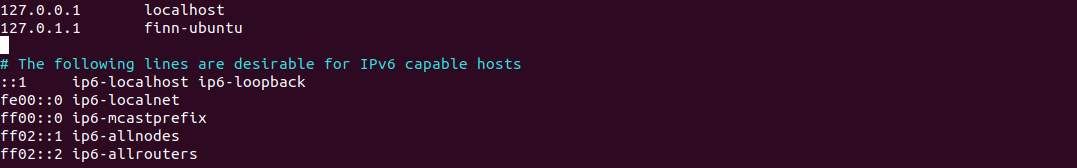

Googling for this specific ROS error message reveals interesting and helpful threads [5] that point us in the right direction. Namely, the key problem is that within our docker container, we don’t find the roscore that is running on the main system. Hence, we need to set the environment variable ROS_MASTER_URI [6], which indicates where to find the running roscore. Luckily enough, the roscore command provides us with that information (consider the output from the roscore command above). In my case, the ROS master is located at http://finn-ubuntu:11311/. A quick look into /etc/hosts reveals the IP that hides behind the local “finn-ubuntu” hostname: 127.0.1.1.
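The same lookup can be done from the command line. This is a minimal sketch, assuming the usual hosts-file layout (IP address first, hostnames after it); lookup_host is a hypothetical helper name, not part of ROS or Docker:

```shell
# Print the IP address that a hosts file maps to the given hostname.
# Usage: lookup_host HOSTNAME [HOSTS_FILE]   (HOSTS_FILE defaults to /etc/hosts)
lookup_host() {
  awk -v name="$1" '
    $1 ~ /^#/ { next }              # skip comment lines
    {
      for (i = 2; i <= NF; i++)     # every field after the IP is a hostname
        if ($i == name) { print $1; exit }
    }
  ' "${2:-/etc/hosts}"
}
```

Calling lookup_host finn-ubuntu on my machine would print the address that the hostname from the roscore output resolves to.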

However, even setting this environment variable within the docker container does not solve the issue; we are still left with the same error as before.

So what’s causing this? Are we approaching the error from the wrong side? Did we maybe just have a typo somewhere? And most importantly: How do we fix this and finally access our valuable ROS nodes/topics from within our docker container?

The solution

Actually, the final (working) solution is very close to what we did previously. However, one crucial detail is missing: docker containers, by default, live in a virtual bridge network [7]! What that means is explained in [7]:

In terms of Docker, a bridge network uses a software bridge which allows containers connected to the same bridge network to communicate, while providing isolation from containers which are not connected to that bridge network.

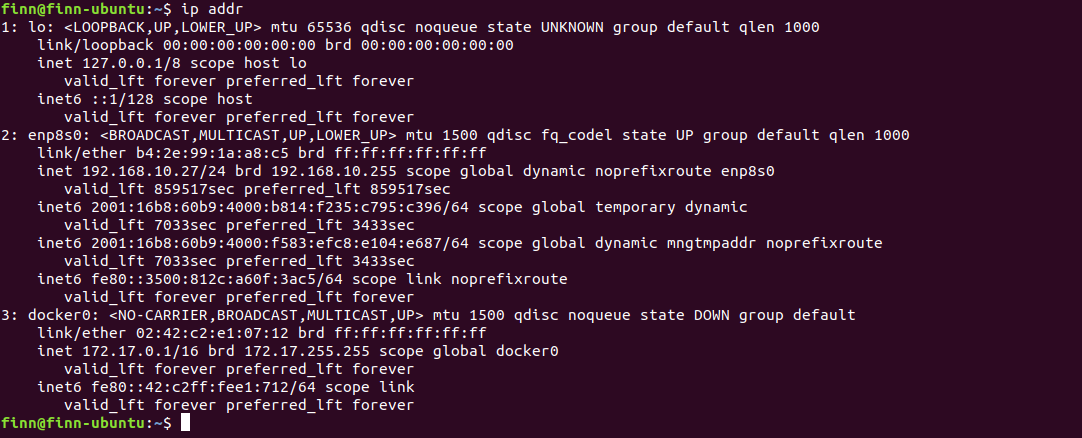

While this is certainly a very powerful and useful concept, it is also apparent how it caused our earlier fix to fail. Inspecting the output of ip addr further illustrates this “problem”: we see all the network interfaces that are currently present on our computer. This usually includes, at the very least, the lo loopback device (which functions as a local virtual network and runs on 127.0.0.1) and the physical network adapter, usually called en0 or, in my case, enp8s0.

(Read more about network interfaces [8] and their naming convention [9])

Additionally, we see the docker0 interface. This is the virtual bridge network mentioned above. Because of it, docker containers can communicate with one another, but are isolated from the other host networks, including lo, the loopback device responsible for the local network on our host machine. Inside a container, a 127.x.x.x address refers to the container’s own loopback device, not the host’s. As we have looked up earlier, roscore runs on 127.0.1.1 (i.e. it is running locally on the host) and is thus not visible from the docker0 bridge.

However, our docker container of course has access to the internet, meaning we can access enp8s0 from within docker. Thus, we can access our host machine via its IPv4 address, which is visible from within our subnet (subnet as in the network shared by all the devices that are connected to your router).
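To read off the relevant addresses without scanning the full ip addr output, the one-line-per-address format of ip can be condensed. This is a small sketch; list_ipv4 is a hypothetical helper name, and it assumes the iproute2 ip tool is installed on the host:

```shell
# Reduce the output of `ip -o -4 addr show` (read from stdin) to
# "interface address" pairs, dropping the prefix length after the slash.
list_ipv4() {
  awk '{ split($4, parts, "/"); print $2, parts[1] }'
}

# On the host: list every interface together with its IPv4 address.
ip -o -4 addr show | list_ipv4
```

The line for the physical adapter (enp8s0 in my case) carries the address that is reachable from inside the container.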

Knowing that our roscore runs on port 11311, we again set the environment variable from within our docker container: export ROS_MASTER_URI=http://192.168.10.27:11311/. This time, however, instead of using the loopback address that finn-ubuntu resolves to (127.0.1.1), we use the enp8s0 IP address of our machine.

And voilà, rosnode list displays the /rosout node, which proves that communication with the roscore from within the docker container works!
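The steps inside the container can be sketched as follows. The IP address and port are the ones from above; can_reach is a hypothetical helper that relies on bash’s /dev/tcp pseudo-device and the coreutils timeout command, both of which I assume are available in the container:

```shell
# Succeed if a TCP connection to HOST:PORT can be opened within 2 seconds.
# Usage: can_reach HOST PORT
can_reach() {
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# Inside the container: point ROS at the LAN address of the host,
# check that the master port answers, then list the active nodes.
export ROS_MASTER_URI=http://192.168.10.27:11311/
if can_reach 192.168.10.27 11311; then
  rosnode list
else
  echo "ROS master not reachable at $ROS_MASTER_URI" >&2
fi
```

Running can_reach against a loopback address from inside the container is a quick way to see the isolation at work: the connection attempt never leaves the container, so it cannot reach the roscore on the host.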

The more elegant solution

The walkthrough above derives the solution in the order in which I learned it. However, after identifying the root of the problem and learning about docker networking, I now know that there is a much more elegant solution. It turns out that the docker developers have considered that some people might need to communicate with services running locally on the machine that also hosts/runs docker. For such a scenario, there exists a dedicated network driver that we can pass to the docker container. This is as simple as passing the following argument to our docker call:

--network host. And that’s it: by adding this argument to the command, you should be able to listen to ROS nodes from within your docker containers right away.
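As a sketch of how this fits into a full command: the image tag ros:noetic, the DOCKER variable and the function name are my assumptions for illustration, and the example relies on the image’s entrypoint setting up the ROS environment before it runs the given command, as the official ros images do.

```shell
# Assumptions: the image tag ros:noetic (use the distro you run) and an image
# entrypoint that sets up the ROS environment before running the command.
ROS_IMAGE="${ROS_IMAGE:-ros:noetic}"
DOCKER="${DOCKER:-docker}"   # set DOCKER=echo to print the command instead

# Run `rosnode list` in a throwaway container.
# Any arguments are passed through to `docker run` as options.
rosnode_list_in_container() {
  "$DOCKER" run --rm "$@" "$ROS_IMAGE" rosnode list
}
```

Calling rosnode_list_in_container without arguments uses the default bridge network and should reproduce the error from earlier, while rosnode_list_in_container --network host should list /rosout, provided roscore is running on the host.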

Summary

By default, docker containers run in a virtual bridge network, which isolates them from host networks like lo and makes the host’s localhost inaccessible. Docker provides a network driver that removes this isolation and makes the host’s loopback network accessible from within the docker container. This host networking driver can be activated with the --network host command line argument. Alternatively, the isolation can be circumvented manually by using the IP address of the host machine’s en0 network adapter (which is accessible from within the docker container for TCP/IP communication).

Cheers,

Finn.

References:

[1] Simultaneous Localization and Mapping

[2] OpenVSLAM

[3] ROS Docker documentation

[4] Docker ROS image

[5] Unable to communicate with master fix

[6] ROS MASTER URI

[7] Virtual bridge network

[8] ifconfig command in depth

[9] enpXsxX naming convention

[10] Docker networking guide

[11] Docker host network driver